Beyond the Buzzwords: Baking Measurable ESG into Your AI CI/CD Pipeline

Learn how to start integrating environmental, social, and governance principles into your MLOps pipeline to turn regulatory compliance into a competitive advantage.

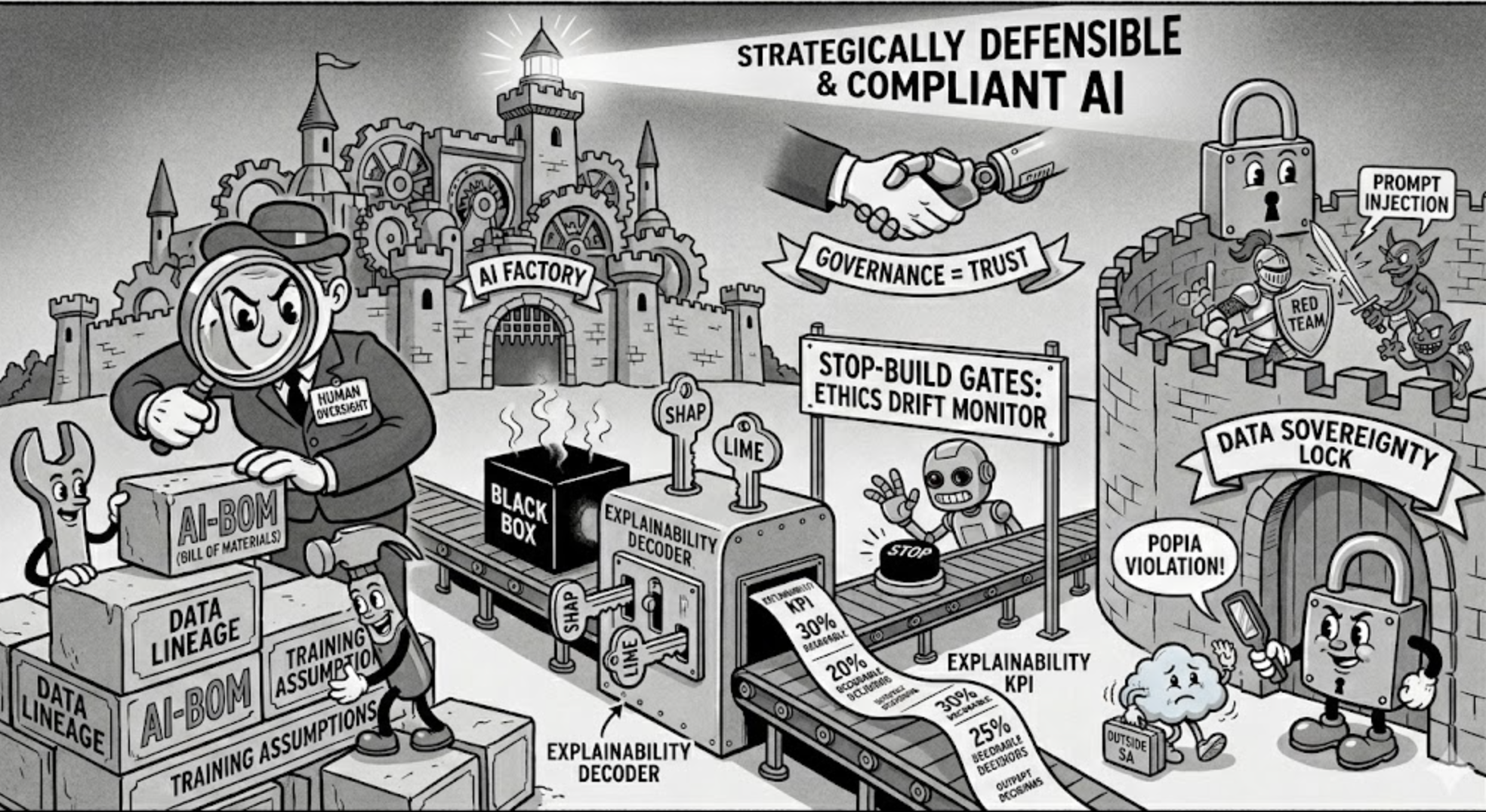

Including Measurable ESG into Your AI CI/CD Pipeline (Photo by Gemini-Nano-Banana).

Your MLOps Lifecycle: The ESG Time Bomb

Treating AI and ESG as separate priorities has made staying compliant nearly impossible over the last few years. On the environmental side, Google experienced a 65% surge in carbon emissions, admitting that the energy-hungry demands of its AI data centers are derailing its net-zero goals. In 2023, the global tutoring firm iTutorGroup paid a $365,000 settlement to the US Equal Employment Opportunity Commission after its automated hiring system was found to reject female applicants aged 55 or older and male applicants aged 60 or older. This is a textbook case of encoded ageism that fails both the social and the governance requirements.

The most definitive evidence of ESG neglect in the AI era comes from the December 2025 AI Company Data Initiative (AICDI) report. It is the world's largest study of corporate AI adoption and analyses 1,000 companies across 13 sectors. The report found that:

- A staggering 97% of companies failed to consider energy use or carbon footprints when deploying AI.

- 68% of firms didn’t assess the societal impact of their tech beyond the end-user, ignoring the wider ripple effects on labor and broader communities.

- While 76% of AI companies claim management oversight, only 41% actually made their AI policies accessible to the employees building the tech.

This blog translates complex ESG frameworks into the practical language of MLOps. It moves past corporate buzzwords to offer actionable ideas for building measurable ESG checks directly into your CI/CD pipeline. The goal is simple: catch unintended consequences before they reach production. Each component section lists managed-service options from Azure and AWS alongside open-source alternatives for independent implementation, so you can choose the right toolset for your use case. Standardised benchmark reporting still has clear gaps, and we hope this post will serve as an initial step towards agreed-upon benchmarks for the MLOps industry in South Africa (and possibly beyond). We invite you to join the conversation and help shape these emerging standards by completing an anonymous survey on where your business currently sits in ESG-for-AI reporting.

Why ESG for AI Matters in South Africa

Legal Mandates

South Africa is still awaiting a formal National AI Act. In the meantime, existing statutes covering the Governance and Social pillars of ESG have already created a de facto regulatory net that can penalise unmanaged AI systems. AI adoption directly intersects with the constitutional rights to equality and privacy. POPIA protects consumers' data rights by restricting cross-border data transfers and by imposing data-accuracy and privacy obligations. Similarly, the Consumer Protection Act (CPA) requires that automated decisions, ranging from bank loans to social grants, remain fair, reasonable, accountable and transparent. For financial institutions in particular, the Financial Sector Conduct Authority's (FSCA) mandates on algorithmic transparency make it clear that unmonitored models can pose a direct threat to your licence to operate.

Defined Metrics and Roadmap for Tradeoffs

The prospect of these repercussions may sound intimidating. However, implementing quantifiable ESG metrics within your AI and MLOps ecosystems helps keep your products viable and trusted by your consumers. South Africa's reporting requirements currently lack the granular rigidity of the EU's safety-aligned legislation, but there is no mistaking that the tide is turning. We are witnessing a decisive shift from “optional” to “obligatory”. Viewing this transition solely through the lens of compliance misses the bigger picture: a strategic commitment to ESG goals is a “win-win” for long-term business resilience. Some objectives seem to clash, such as the inherent tension between pushing for higher model accuracy and reducing CO2 emissions. Even there, integrating ESG into your MLOps provides a clear, data-driven framework for navigating the trade-off with precision, so you avoid regulatory or financial trouble while chasing performance.
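One way to make that trade-off explicit in a pipeline is to treat emissions as a second objective during model selection. The sketch below is illustrative only. It assumes each candidate has already been evaluated for accuracy and for estimated grams of CO2e per 1,000 inferences (for example, measured with CodeCarbon). The `Candidate` type, the helper functions, the model names and all numbers are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    accuracy: float        # validation accuracy, 0..1
    g_co2e_per_1k: float   # estimated grams CO2e per 1,000 inferences


def pareto_front(candidates: list[Candidate]) -> list[Candidate]:
    """Keep candidates that no other candidate beats on both accuracy and emissions."""
    front = []
    for c in candidates:
        dominated = any(
            o.accuracy >= c.accuracy
            and o.g_co2e_per_1k <= c.g_co2e_per_1k
            and (o.accuracy > c.accuracy or o.g_co2e_per_1k < c.g_co2e_per_1k)
            for o in candidates
        )
        if not dominated:
            front.append(c)
    return front


def select_within_budget(candidates: list[Candidate], budget_g_per_1k: float) -> Candidate | None:
    """Pick the most accurate model whose emissions fit the (assumed) carbon budget."""
    affordable = [c for c in candidates if c.g_co2e_per_1k <= budget_g_per_1k]
    return max(affordable, key=lambda c: c.accuracy, default=None)


models = [
    Candidate("large", accuracy=0.934, g_co2e_per_1k=12.0),
    Candidate("distilled", accuracy=0.921, g_co2e_per_1k=3.5),
    Candidate("quantised", accuracy=0.918, g_co2e_per_1k=2.1),
    Candidate("legacy", accuracy=0.900, g_co2e_per_1k=4.0),  # beaten on both axes by "distilled"
]
print([c.name for c in pareto_front(models)])            # ['large', 'distilled', 'quantised']
print(select_within_budget(models, budget_g_per_1k=5.0).name)  # distilled
```

The Pareto front records which options were rejected and why. The budget turns a vague sustainability goal into a pass/fail selection rule that can be reviewed like any other threshold.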

Business Case for ESG Framework for AI

In the South African context, the business case for an ESG framework for AI rests on turning ethical overhead into a distinct competitive advantage, because consumers increasingly reward it. The financial upside for ESG-compliant companies has become hard to ignore, as recent market shifts show. Take OpenAI vs Anthropic. OpenAI's partnership with the U.S. Department of War was perceived as a compromise on surveillance ethics and social standards, and it triggered a 295% surge in ChatGPT uninstalls. Meanwhile, Anthropic's Claude climbed to No. 1 on the App Store by doubling down on its safety-first principles.

Beyond reputation, robust governance is also the only way to cut through the noise of AI greenwashing. As investor scrutiny matures, “AI for Good” messaging is being replaced by demands for operational integrity and stress-tested climate pathways. In South Africa's resource-constrained landscape, vague ambitions will not suffice for much longer. Investors are likely to start requiring audit-proof, location-based data to verify real-world emissions reductions. Without this transparency, firms risk divestment as capital markets increasingly penalise companies that fail to reconcile AI-driven expansion with binding energy and water constraints.

Unmanaged and Unmonitored AI Systems Can Lead to Legal Trouble (Photo by Gemini-Nano-Banana).

Suggested ESG for AI Framework

ESG reporting for AI is moving from voluntary ethical frameworks to mandatory, high-stakes compliance, yet it remains fragmented by a significant reporting divide. Internationally, the EU AI Act is the most complete regulatory anchor. It requires providers of general-purpose AI models to maintain rigorous technical documentation, including energy consumption, and it imposes high levels of transparency on high-risk applications. These requirements increasingly align with international baselines. One is ISO/IEC 21031:2024, which formally adopts the Green Software Foundation's Software Carbon Intensity (SCI) specification. Another is the OECD AI Principles (2024 revision), which emphasise human agency and inclusive growth.

Despite these advancements, a critical reporting gap persists. Many organisations rely on the green credentials of hyperscale data centers (such as carbon-neutral infrastructure) as a proxy for their own AI's environmental impact. This is insufficient, because high-level data center metrics often fail to capture the granular energy intensity of model training and inference. That is the actual carbon cost of the software logic itself, and it is what the Green Software Foundation's SCI score measures. Relying solely on hyperscalers for AI ESG compliance also leaves businesses exposed in three ways:

- regulatory fines for incomplete documentation;
- greenwashing accusations;
- social blind spots, without the transparency needed to audit for local bias or true sustainability.

Formal environmental metrics provide some technical baseline, but the social and governance dimensions still lack a globally standardised equivalent for reporting. To start bridging these gaps, we provide both managed-service and open-source options that internal development teams can use to self-audit.

Mind the Voluntary Ethics Gap (Photo by Gemini-Nano-Banana).

Pillar I: Environmental Accountability

Environmental accountability can be practised at every stage of the machine learning lifecycle to minimise AI's physical footprint. It begins with a shift in mindset, where sustainability is treated as a primary success metric alongside accuracy. Compute cost savings are often a good proxy for energy savings, so this approach can also improve the business's profit margins. The Software Carbon Intensity (SCI) score lets organisations quantify their impact as a rate. Operational emissions (energy consumed multiplied by the carbon intensity of the grid supplying it) are added to a share of embodied hardware emissions. The total is then divided by a functional unit, such as an inference or an API request.
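As a worked example, the SCI specification defines the score as SCI = ((E × I) + M) per R:

- E is the energy consumed by the software (kWh);
- I is the location-based carbon intensity of that electricity (gCO2e/kWh);
- M is the embodied hardware emissions allocated to the workload (gCO2e);
- R is the functional unit.

M is itself amortised as M = TE × (TiR / EL) × (RR / ToR). Here TE is total embodied emissions, TiR is the time reserved, EL is the hardware's expected lifespan, RR is the resources reserved and ToR is the total resources. The sketch below assumes E has been measured separately. The function names are hypothetical, and every input value is illustrative rather than a measured South African figure.

```python
def embodied_share(total_embodied_g: float, hours_reserved: float, lifespan_hours: float,
                   resources_reserved: float, total_resources: float) -> float:
    """M = TE * (TiR / EL) * (RR / ToR): the slice of manufacturing emissions
    attributable to this workload."""
    return total_embodied_g * (hours_reserved / lifespan_hours) * (resources_reserved / total_resources)


def sci_score(energy_kwh: float, grid_g_per_kwh: float, embodied_g: float,
              functional_units: int) -> float:
    """SCI = ((E * I) + M) / R, in gCO2e per functional unit."""
    if functional_units <= 0:
        raise ValueError("functional_units must be positive")
    operational_g = energy_kwh * grid_g_per_kwh
    return (operational_g + embodied_g) / functional_units


# Illustrative inputs for one day of serving on 1 of 4 accelerators (assumptions, not measurements).
M = embodied_share(total_embodied_g=876_000, hours_reserved=24,
                   lifespan_hours=4 * 8_760, resources_reserved=1, total_resources=4)
score = sci_score(energy_kwh=4.0, grid_g_per_kwh=900.0, embodied_g=M,
                  functional_units=250_000)
print(f"Embodied share: {M:.1f} g; SCI: {score:.4f} gCO2e per request")
```

SCI is a rate rather than a total, so it does not fall simply because traffic falls. That makes it usable as a regression metric when comparing model versions.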

Pragmatically, this means moving away from ‘always-on’ infrastructure in favour of serverless architectures and aggressive auto-termination policies that kill ‘ghost workloads’ and idle clusters. On the technical side, engineers can significantly lower their footprint in two ways. Pre-trained models avoid the large energy cost of training from scratch. Techniques such as weight quantisation and pruning mean models need fewer computational cycles during inference. Retraining can also be optimised by triggering it when monitored performance drops, rather than running it on a fixed, time-based schedule.
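A performance-triggered retraining policy can be as small as a single check. The check runs on a cheap schedule and only launches the expensive training job when the evidence justifies it. In this sketch, `should_retrain` is a hypothetical helper, and the 5% relative-drop threshold and three-window minimum are assumptions to tune per model.

```python
from statistics import mean


def should_retrain(baseline_metric: float, recent_scores: list,
                   max_relative_drop: float = 0.05, min_samples: int = 3) -> bool:
    """Return True only when the recent average metric has fallen more than
    max_relative_drop (0.05 = 5%) below the baseline measured at deployment."""
    if len(recent_scores) < min_samples:
        return False  # not enough evaluation windows yet; avoid retraining on noise
    drop = (baseline_metric - mean(recent_scores)) / baseline_metric
    return drop > max_relative_drop


print(should_retrain(0.90, [0.89, 0.88, 0.90]))  # False: ~1.1% drop, keep serving
print(should_retrain(0.90, [0.84, 0.83, 0.85]))  # True: ~6.7% drop, trigger training job
```

Requiring a minimum number of evaluation windows avoids retraining on a single noisy evaluation. A spurious retrain would waste exactly the energy this policy is meant to save.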

Beyond architecture, storage hygiene is critical. Managing data lifecycles so that unused artefacts move to low-energy cold storage can significantly reduce the energy overhead of keeping data “hot”. Batch transform jobs also help, because they keep hardware saturated instead of idling between requests. Organisations can then run their infrastructure closer to peak efficiency and get more value from their computational investment.
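The tiering rule behind a lifecycle policy is simple to express. The sketch below assumes each artefact carries a last-access date. It uses hypothetical thresholds (30 days to cool, 180 days to archive) and tier names modelled on Azure Blob Storage's hot/cool/archive tiers. In practice you would encode the same rule in the managed lifecycle service rather than move files yourself. The artefact paths and sizes are made up.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Artefact:
    path: str
    size_gb: float
    last_accessed: date


def assign_tier(artefact: Artefact, today: date,
                cool_after_days: int = 30, archive_after_days: int = 180) -> str:
    """Return the storage tier an artefact should live in, based on days since last access."""
    idle_days = (today - artefact.last_accessed).days
    if idle_days >= archive_after_days:
        return "archive"
    if idle_days >= cool_after_days:
        return "cool"
    return "hot"


def hot_gb_leaving(artefacts: list[Artefact], today: date) -> float:
    """GB moved out of hot storage, i.e. hot GB-months avoided for each month they stay cold."""
    return sum(a.size_gb for a in artefacts if assign_tier(a, today) != "hot")


# Hypothetical inventory of a project's artefacts.
inventory = [
    Artefact("data/raw/2024_snapshot.parquet", 120.0, date(2025, 9, 1)),
    Artefact("models/candidate_v3.onnx", 2.0, date(2026, 3, 20)),
    Artefact("features/train.parquet", 40.0, date(2026, 1, 15)),
]
today = date(2026, 4, 1)
for a in inventory:
    print(a.path, "->", assign_tier(a, today))
print("Hot GB moved:", hot_gb_leaving(inventory, today))
```

The output of `hot_gb_leaving` maps directly onto the “Reduction in GB-months of hot storage” metric in the table below.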

| Intervention | Possible Implementation | Quantification Suggestion | Managed Service Options | Open Source Option |
|---|---|---|---|---|
| Standardised Impact Measurement | Establishing a unified methodology for carbon accounting at the product/project level across the AI lifecycle. | Software Carbon Intensity (SCI) score; grams of CO2e per inference, request or token. | Azure: Carbon Optimization; AWS: Customer Carbon Footprint Tool | CodeCarbon or CarbonTracker |
| Idle Resource Management | Automatically terminating compute instances or notebooks that are active but not processing work. | Ratio of active vs. idle compute hours; compute efficiency ratio; idle power intensity. | Azure: Auto-shutdown (VMs) & ML Idle Shutdown; AWS: SageMaker Idle Shutdown | Cloud Carbon Footprint (CCF) / Kepler (CNCF) |
| Architecture & Training Optimisation | Prioritising pre-trained models and AI services; optimising retraining to occur only on performance degradation. | Accuracy-to-energy ratio; % reduction in model parameters; training hours saved. | Azure: Foundry Models & ML Dataset Monitors; AWS: Amazon Bedrock | Hugging Face / TFLite |
| Storage Hygiene | Managing data lifecycles to move unused artefacts and large datasets to low-energy cold storage. | Reduction in GB-months of “hot” storage; data duplication rate; storage carbon-efficiency ratio. | Azure: Blob Storage Lifecycle Management; AWS: S3 Intelligent-Tiering | DVC |
| Inference Efficiency | Optimising via weight quantisation/pruning; using Serverless Endpoints or Batch Transform jobs. | Tokens per joule (tokens/s per watt); latency vs. energy per 1,000 predictions (Wh). | Azure: ML Serverless Endpoints; AWS: Inferentia (Neuron SDK) | OpenVINO |

Environmental Accountability Can Improve the Efficiency of the ML Lifecycle (Photo by Gemini-Nano-Banana).
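The idle-resource row in the table above can be prototyped from utilisation metrics before committing to a managed auto-shutdown feature. This sketch makes three assumptions:

- utilisation is sampled every 10 minutes per instance;
- anything under 5% counts as idle;
- an instance is flagged after six consecutive idle samples (one hour).

The instance names, thresholds and helper functions are hypothetical. The actual termination call is left to your platform's API.

```python
from __future__ import annotations


def idle_instances(util_samples: dict[str, list[float]], threshold_pct: float = 5.0,
                   idle_window: int = 6) -> list[str]:
    """Flag instances whose last `idle_window` utilisation samples are all below threshold."""
    flagged = []
    for name, samples in util_samples.items():
        recent = samples[-idle_window:]
        if len(recent) == idle_window and all(s < threshold_pct for s in recent):
            flagged.append(name)
    return flagged


def active_ratio(util_samples: dict[str, list[float]], threshold_pct: float = 5.0) -> float:
    """Share of sampled instance-intervals that were doing real work (active vs. idle)."""
    all_samples = [s for samples in util_samples.values() for s in samples]
    if not all_samples:
        return 0.0
    return sum(s >= threshold_pct for s in all_samples) / len(all_samples)


# Hypothetical GPU/CPU utilisation (%) per 10-minute sample.
fleet = {
    "train-gpu-01": [92, 88, 95, 90, 91, 89],
    "notebook-dev": [40, 3, 1, 0, 2, 1, 0],  # forgotten notebook: idle for the last hour
    "batch-cpu-02": [0, 0, 0, 70, 65, 80],   # quiet earlier, busy now: not flagged
}
print("Terminate candidates:", idle_instances(fleet))
print(f"Active ratio: {active_ratio(fleet):.2f}")
```

Tracking `active_ratio` over time gives the “ratio of active vs. idle compute hours” metric. A rising ratio shows the shutdown policy is working.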

Pillar II: Social Responsibility

Socially responsible MLOps focuses on three core areas: Algorithmic Integrity, Digital Inclusion, and Human Oversight. Organisations can proactively audit for bias against protected characteristics, such as race, gender and age, using the Disparate Impact Ratio (DIR). Doing so helps ensure that AI-driven decisions (like loan approvals or job screenings) comply with South Africa's existing legal frameworks.
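The Disparate Impact Ratio compares the rate of favourable outcomes for an unprivileged group with the rate for a privileged group: DIR = P(favourable | unprivileged) / P(favourable | privileged). A value of 1.0 means parity. The commonly cited 0.8 cut-off comes from the US “four-fifths rule”. It is used here only as an assumed screening threshold, not as a South African legal standard. The outcomes below are synthetic and the helper names are hypothetical. Fairlearn (`demographic_parity_ratio`) and AIF360 (`disparate_impact`) provide maintained implementations of closely related metrics.

```python
from __future__ import annotations


def selection_rate(outcomes: list[int]) -> float:
    """Share of favourable outcomes, where 1 = favourable (e.g. shortlisted)."""
    if not outcomes:
        raise ValueError("group has no records")
    return sum(outcomes) / len(outcomes)


def disparate_impact_ratio(outcomes: list[int], groups: list[str],
                           unprivileged: str, privileged: str) -> float:
    """DIR = selection rate of the unprivileged group / selection rate of the privileged group."""
    unpriv = [o for o, g in zip(outcomes, groups) if g == unprivileged]
    priv = [o for o, g in zip(outcomes, groups) if g == privileged]
    return selection_rate(unpriv) / selection_rate(priv)


# Synthetic screening results: 6/10 under-55 applicants shortlisted vs 3/10 aged 55+.
outcomes = [1] * 6 + [0] * 4 + [1] * 3 + [0] * 7
groups = ["under_55"] * 10 + ["55_plus"] * 10

dir_value = disparate_impact_ratio(outcomes, groups, unprivileged="55_plus", privileged="under_55")
print(f"DIR = {dir_value:.2f}")  # DIR = 0.50
if dir_value < 0.8:  # assumed screening threshold (four-fifths rule)
    print("Potential adverse impact: block deployment and investigate.")
```

A DIR well below the threshold, as here, is exactly the pattern behind the iTutorGroup case. It is cheap to detect before deployment and expensive to discover afterwards.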

However, the social contract of AI extends far beyond fair code. In a landscape where 54% of South African employees fear AI-driven displacement, the discussion must shift from business savings through human replacement to strategic augmentation. This is consistent with the UNESCO Recommendation on the Ethics of AI and the 2024 OECD AI Principles; South Africa had not formally adhered to the latter as of April 2026. Under these principles, automated systems should not simply eliminate entry-level, stepping-stone roles. Instead, responsible deployment should trigger mandatory reskilling pathways with a measurable return on investment. These pathways move staff from manual tasks into higher-value oversight positions, so the workforce evolves alongside the technology rather than being left behind.

For an MLOps-focused firm, this transition begins by using O*NET to deconstruct specific roles, such as Junior Data Engineer. Firms can then identify manual tasks, like repetitive data cleaning and file formatting, that are ripe for automation via specialised ETL pipelines. This pinpoints exactly where human labour can be elevated. Rather than phasing these workers out, organisations can sponsor credentialed learning paths through private-sector partnerships or NGOs. In this evolved capacity, a former junior data engineer moves into an MLOps oversight position, from manual data handling to managing automated CI/CD pipelines and monitoring model health. The workforce thus matures into high-value roles that oversee the very systems that automated their previous manual work.

Additionally, organisations with the requisite ESD funds can sponsor courses through private-sector partnerships, like the Microsoft AI Skilling Initiative, which aims to train one million South Africans by 2026 through credentialed learning paths that move workers from basic digital literacy to advanced AI fluency.

Yet the commitment to social sustainability does not end with internal reskilling. It must also extend to the end-user experience, so that the benefits of advanced AI are technically accessible to the broader population. In local markets where high-end hardware is a luxury, customer-facing models must be optimised to run on the legacy and budget-friendly devices common across South Africa. Techniques such as weight quantisation and pruning, model distillation, and edge-device performance testing help keep advanced AI services accessible to all citizens, regardless of their hardware tier.
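To illustrate what weight quantisation does, the pure-Python sketch below applies symmetric, per-tensor int8 quantisation. Each weight is stored as an integer q in [-127, 127] together with one shared floating-point scale, so w ≈ q × scale. This is a teaching sketch with hypothetical function names and made-up weights. Real deployments would rely on the quantisation tooling in ONNX Runtime, OpenVINO or TFLite, which also handles activations and calibration.

```python
from __future__ import annotations


def quantise_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantisation: w ≈ q * scale with q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantise(q: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the int8 values."""
    return [v * scale for v in q]


weights = [0.5, -1.27, 0.0, 0.9]
q, scale = quantise_int8(weights)
restored = dequantise(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, f"scale={scale:.4f}", f"max error={max_error:.5f}")
# Each weight now needs 1 byte instead of 4 (float32): roughly 4x less weight storage.
```

The rounding error is bounded by half the scale. That bound is why accuracy usually drops only slightly while memory, bandwidth and energy per inference fall, which matters most on budget handsets. Confirm the drop on your own data through the edge-device performance testing mentioned above.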

Ultimately, this human-centric approach is anchored by the principle of Human Stewardship. Through verifiable Human-in-the-loop (HITL) overrides, we ensure that automated systems remain under meaningful human control. This will be further discussed in the Governance section.

| Intervention | Possible Implementation | Quantification Suggestion | Managed Service Options | Open Source Option |
|---|---|---|---|---|
| Workforce Augmentation | Transitioning staff from routine tasks to AI-oversight roles to prevent displacement. | Internal Mobility Success Rate: % of roles successfully transitioned internally. | Azure: Viva Learning; AWS: Skill Builder | Hugging Face Course |
| Algorithmic Fairness | Detecting and mitigating bias against sensitive demographics before deployment. | Disparate Impact Ratio (DIR), counterfactual fairness, equalised odds. | Azure: Responsible AI Dashboard; AWS: SageMaker Clarify | Fairlearn / AIF360 |
| Digital Equality | Optimising models for performance on budget/legacy devices common in South Africa. | Performance Parity Ratio (legacy vs. flagship); battery drain per token; latency. | Azure: ML Managed Endpoints; AWS: IoT Greengrass | ONNX Runtime / DistilBERT |
| PII/PHI Redaction Scan | Automating detection/redaction of sensitive data (IDs, health records) for POPIA compliance. | Redaction Accuracy Rate: % of sensitive entities correctly identified. | Azure: Azure AI Language; AWS: Amazon Comprehend | Microsoft Presidio |

Social Accountability Ensures AI Systems Serve the Wider Community (Photo by Gemini-Nano-Banana).
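A PII scan for POPIA purposes usually needs locale-specific recognisers. As an illustrative sketch, the code below detects 13-digit South African ID numbers whose final digit passes the Luhn checksum. It redacts them and computes the Redaction Accuracy Rate from the table above as recall against a hand-labelled list. The helper names and sample text are hypothetical, and the ID is a synthetic example. A production scan would add date-of-birth validation and run inside a tool such as Microsoft Presidio, for example as a custom pattern recogniser.

```python
from __future__ import annotations

import re

SA_ID_PATTERN = re.compile(r"\b\d{13}\b")  # 13 digits not embedded in a longer number


def luhn_valid(number: str) -> bool:
    """Luhn checksum: double every second digit from the right, subtract 9 if > 9."""
    total = 0
    for i, ch in enumerate(reversed(number)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def redact_sa_ids(text: str) -> tuple[str, list[str]]:
    """Replace checksum-valid 13-digit IDs with a placeholder; return text and hits."""
    found = [m.group() for m in SA_ID_PATTERN.finditer(text) if luhn_valid(m.group())]
    redacted = SA_ID_PATTERN.sub(
        lambda m: "[SA_ID]" if luhn_valid(m.group()) else m.group(), text)
    return redacted, found


def redaction_rate(found: list[str], labelled: list[str]) -> float:
    """Share of hand-labelled sensitive entities that the scan caught (recall)."""
    if not labelled:
        return 1.0
    return len(set(found) & set(labelled)) / len(set(labelled))


sample = "Applicant ID 8001015009087 paid invoice 1234567890123."
redacted, found = redact_sa_ids(sample)
print(redacted)  # Applicant ID [SA_ID] paid invoice 1234567890123.
print(redaction_rate(found, labelled=["8001015009087"]))  # 1.0
```

The checksum keeps ordinary 13-digit numbers, such as the invoice number above, from being redacted. This reduces false positives without weakening recall on genuine IDs.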

Pillar III: Governance & Oversight

AI governance is evolving into a strategic framework that moves organisations towards fuller transparency for high-stakes applications. Inspired by the record-keeping requirements in Article 12 of the EU AI Act, industry best practice now advocates maintaining an immutable AI Bill of Materials (AI-BOM). An AI-BOM documents data lineage, training assumptions and human oversight. It helps shift AI from an opaque ‘black box’ to a transparent system in which explainability serves as a key performance indicator. Explainability can be measured as the percentage of decisions whose outputs can be attributed to input features using tools like SHAP or LIME. This gives a transparency benchmark that is both measurable and auditable.
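An AI-BOM only supports audits if later edits are detectable. One lightweight way to get tamper evidence is to chain each record to the hash of the previous one. This is not true immutability, which still needs write-once storage or a versioned table format such as Delta Lake. The `AIBOMEntry` fields, helper functions and example values below are hypothetical. They simply mirror the data lineage, training assumptions and human oversight listed in the paragraph above.

```python
from __future__ import annotations

import hashlib
import json
from dataclasses import asdict, dataclass

GENESIS_HASH = "0" * 64


@dataclass(frozen=True)
class AIBOMEntry:
    model_name: str
    model_version: str
    dataset_uri: str
    dataset_sha256: str
    training_assumptions: tuple[str, ...]
    approved_by: str  # human oversight: who signed off this version


def _digest(body: dict) -> str:
    """Stable SHA-256 over a canonical (sorted-key) JSON encoding of the record body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(ledger: list[dict], entry: AIBOMEntry) -> dict:
    """Append a record that commits to the previous record's hash."""
    prev_hash = ledger[-1]["record_hash"] if ledger else GENESIS_HASH
    body = {"entry": asdict(entry), "prev_hash": prev_hash}
    record = {**body, "record_hash": _digest(body)}
    ledger.append(record)
    return record


def verify_ledger(ledger: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered record breaks the chain."""
    prev_hash = GENESIS_HASH
    for record in ledger:
        body = {"entry": record["entry"], "prev_hash": record["prev_hash"]}
        if record["prev_hash"] != prev_hash or record["record_hash"] != _digest(body):
            return False
        prev_hash = record["record_hash"]
    return True


ledger: list[dict] = []
data_hash = hashlib.sha256(b"loans-2026-03 snapshot").hexdigest()  # stand-in for a real dataset hash
append_entry(ledger, AIBOMEntry("credit-scoring", "1.4.0", "s3://example-bucket/loans/2026-03/",
                                data_hash, ("applicants aged 18+",), "risk-committee"))
append_entry(ledger, AIBOMEntry("credit-scoring", "1.5.0", "s3://example-bucket/loans/2026-04/",
                                data_hash, ("applicants aged 18+",), "risk-committee"))
print(verify_ledger(ledger))  # True
ledger[0]["entry"]["dataset_uri"] = "s3://example-bucket/loans/other/"  # simulated tampering
print(verify_ledger(ledger))  # False
```

The ledger answers the auditor's question “has this record changed since sign-off?”. Storing it in append-only storage answers “could it have been deleted?”.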

To operationalise these standards within the MLOps lifecycle, organisations should integrate automated ‘Stop-Build’ gates and real-time monitors for ethics drift. When governance is a native part of the development process through Automated Model Cards, decisions become explainable, contestable and strategically defensible. Adversarial red teaming strengthens this further by testing the model against security vulnerabilities such as prompt injection.

South African organisations must also embed geographic accountability directly into their technical workflows. This includes a mandatory Data Sovereignty Check that verifies all training data is stored within the required geographic boundaries. Specifically, it confirms that cloud-hosted data residency is locked to South Africa or other sanctioned regions. Enforcing these residency locks keeps firms compliant with POPIA's transborder-flow restrictions and ensures that sensitive citizen data never leaves the protection of the Republic or sanctioned regions.
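At its core, a ‘Stop-Build’ gate is a script that returns a non-zero exit code when a policy check fails. Most CI systems (GitHub Actions, Azure Pipelines, GitLab CI) treat that as a failed step. The sketch below combines a fairness floor, an ethics-drift tolerance and a data sovereignty check. Several elements are assumptions standing in for your own policy:

- the metric names;
- the 0.8 DIR floor;
- the 5% relative drift limit;
- the allowed-region list (Azure's South Africa North/West and AWS's Cape Town region `af-south-1`).

```python
from __future__ import annotations

# Assumed policy: regions where training data may reside.
ALLOWED_REGIONS = {"southafricanorth", "southafricawest", "af-south-1"}


def stop_build_checks(metrics: dict[str, float], baseline: dict[str, float],
                      data_regions: list[str], min_dir: float = 0.8,
                      max_relative_drift: float = 0.05) -> list[str]:
    """Return a list of policy violations; an empty list means the build may proceed."""
    failures = []
    # Fairness floor on the Disparate Impact Ratio.
    if metrics["dir"] < min_dir:
        failures.append(f"disparate impact ratio {metrics['dir']:.2f} is below {min_dir}")
    # Ethics drift: relative change from the approved baseline.
    for name in ("accuracy", "dir"):
        drift = abs(metrics[name] - baseline[name]) / baseline[name]
        if drift > max_relative_drift:
            failures.append(f"{name} drifted {drift:.1%} from baseline (limit {max_relative_drift:.0%})")
    # Data sovereignty: every storage region must be sanctioned.
    outside = sorted(set(data_regions) - ALLOWED_REGIONS)
    if outside:
        failures.append(f"training data stored outside sanctioned regions: {', '.join(outside)}")
    return failures


failures = stop_build_checks(
    metrics={"accuracy": 0.91, "dir": 0.76},
    baseline={"accuracy": 0.92, "dir": 0.85},
    data_regions=["southafricanorth", "westeurope"],
)
for failure in failures:
    print("STOP-BUILD:", failure)
# In the CI step itself, finish with: sys.exit(1 if failures else 0)
```

Returning every violation, rather than stopping at the first, gives the human approver the full picture in one pipeline run.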

| Intervention | Possible Implementation | Quantification Suggestion | Managed Service Options | Open Source Option |
|---|---|---|---|---|
| Immutable Data Lineage | Maintaining a Lineage of Truth to prevent data poisoning and ensure auditability. | Metadata completeness rate; traceability index. | Azure: Data Lake Storage; AWS: Glue Data Catalog & Lake Formation | Delta Lake / DVC |
| Automated Model Cards | Generating AI-BOM artefacts documenting training and ethical audits. | Audit readiness rate (validated AI-BOMs vs. total models). | Azure: Responsible AI Scorecard; AWS: SageMaker Model Cards | Model Card Toolkit |
| Explainability | Providing human-readable reports on features influencing specific AI outputs. | Explainability coverage (% of decisions with a SHAP/LIME explanation). | Azure: ML Interpretability; AWS: SageMaker Clarify | Evidently AI |
| Ethics Drift Monitoring | Tracking whether fairness/accuracy metrics degrade as real-world data evolves. | Drift variance (alert if metrics deviate by >5% from baseline). | Azure: Responsible AI Scorecard; AWS: SageMaker Model Monitor | Evidently AI / Alibi Detect |
| Human Approval (HITL) | Mandatory manual sign-off for models exceeding “Impact Thresholds”. | Override frequency; reviewer decisions/hr. | Azure: ML Approval Gates; AWS: Augmented AI (A2I) | Label Studio |
| Adversarial Red Teaming | Testing for prompt injection or model inversion to prevent leakage. | Security resilience ratio; guardrail latency. | Azure: AI Red Teaming Toolkit; AWS: Bedrock Guardrails | PyRIT |

Implementing Good Governance Ensures Your Organisation is Safeguarded (Photo by Gemini-Nano-Banana).

Conclusion

Putting AI ESG mandates into practice requires a fundamental shift in how we build and deploy technology. As we have explored, the broad-stroke green initiatives of cloud providers are not a sufficient defence against three sets of requirements: the granular demands of the EU AI Act, South Africa's evolving financial and privacy regulations, and the expected demands of a future South African AI Act. South African firms can integrate environmental tracking, social fairness audits and automated governance directly into the MLOps pipeline. Doing so turns what is often seen as a compliance tax into a robust framework for long-term business resilience and consumer trust.

However, the path forward cannot be walked in isolation. While the technical tools for both managed and open-source implementations exist, the industry currently lacks a unified benchmark for what constitutes compliant performance in the local context. We believe that establishing these formal baselines is a collective responsibility that requires cross-sector collaboration between engineers, legal experts, and policymakers. We must move beyond AI for Good as a marketing slogan and toward AI for Accountability as a professional standard, ensuring that our digital future remains as sustainable as it is innovative.

Similar Resources

- Beyond the Hype: What ESG Leaders Need to Know About AI – An insightful exploration of how ESG leaders can navigate the dual nature of AI, balancing its significant risks with its potential for sustainability innovation.

- AI and ESG: Busting the Myths – A practical guide that debunks common misconceptions surrounding the intersection of Artificial Intelligence and corporate social responsibility, focusing on how HR and ESG teams can work together.

- Integrating ESG into AI Governance Frameworks – Addresses the inherent challenges of aligning rapid AI development with sustainability goals and the necessity of embedding ESG metrics into formal governance structures.

- Businesses Failing to Assess Environmental Impact of AI – A report highlighting a critical gap in current business practices, revealing that only a small fraction of companies are actively measuring the environmental footprint of their AI systems.

. . .

Thanks for reading. If you want to know more about cloud-native tech and machine learning deployments, email us at poke@melio.ai.