South Africa AI Policy Withdrawal: A Wake-Up Call for Designed Accountability

The incident is a warning to organisations that reliability comes from guardrails, traceability, and human-in-the-loop checks - and that none of those things happen by accident.

Context: From Policy Debate to Policy Withdrawal

A few weeks ago, we shared our take on South Africa’s proposed AI policy after an internal Lunch & Learn. At the time, most of the debate centred on ambition versus execution and whether the country could realistically deliver on what was being proposed.

❗️️️️❗️️️️❗️️️️ Then the draft was withdrawn ❗❗️️️️❗️️️️️️️️

This was not because of disagreement on direction, but because the document itself contained fabricated references that appear to have been generated by AI and not properly verified. (SAnews)

That detail changes the conversation, because what looked like a policy gap is now a real-world failure case. This didn’t fail at the policy level. It failed at the system level.

The system used to produce the policy generated plausible-sounding citations, no one validated them, and the error made it all the way into a national document. The minister’s response was blunt: “this wasn’t a minor issue, it compromised the credibility of the policy itself.” (SAnews)

Why This Pattern Keeps Showing Up

The irony is worth sitting with: the policy was trying to introduce governance (ethics boards, oversight, accountability) while the process used to create it had none of those things applied to itself.

That’s not a coincidence. It’s the same gap you see in teams building AI systems. Nothing malicious or dramatic. Just: AI output was used, no one had a defined step to check it, and it moved forward as if it were true.

Reuters called this out directly as a lesson in why human oversight over AI is critical. (Reuters)

The Real Gap Is Accountability

But “human oversight” is where most teams get vague.

The failure here wasn’t a model problem. The model generated plausible-sounding references - that’s exactly what it’s designed to do. The failure was that no one owned the step of verifying them.

Three things were missing:

- No explicit step to verify references

- No traceability of where claims came from

- No clear ownership of validation before publication

That’s not an edge case, but a design gap.

You see the same thing in enterprise systems:

💡 A document pipeline hits 90%+ accuracy in testing, but there’s no rule for what happens at the 10% that fails - so errors slip through quietly.

💡 A support chatbot performs well in most cases, but hallucinations still reach customers unnoticed.

In both cases, the issue isn’t the model. It’s that no one defined who is responsible for the output before it reaches users.

What a System With Accountability Looks Like

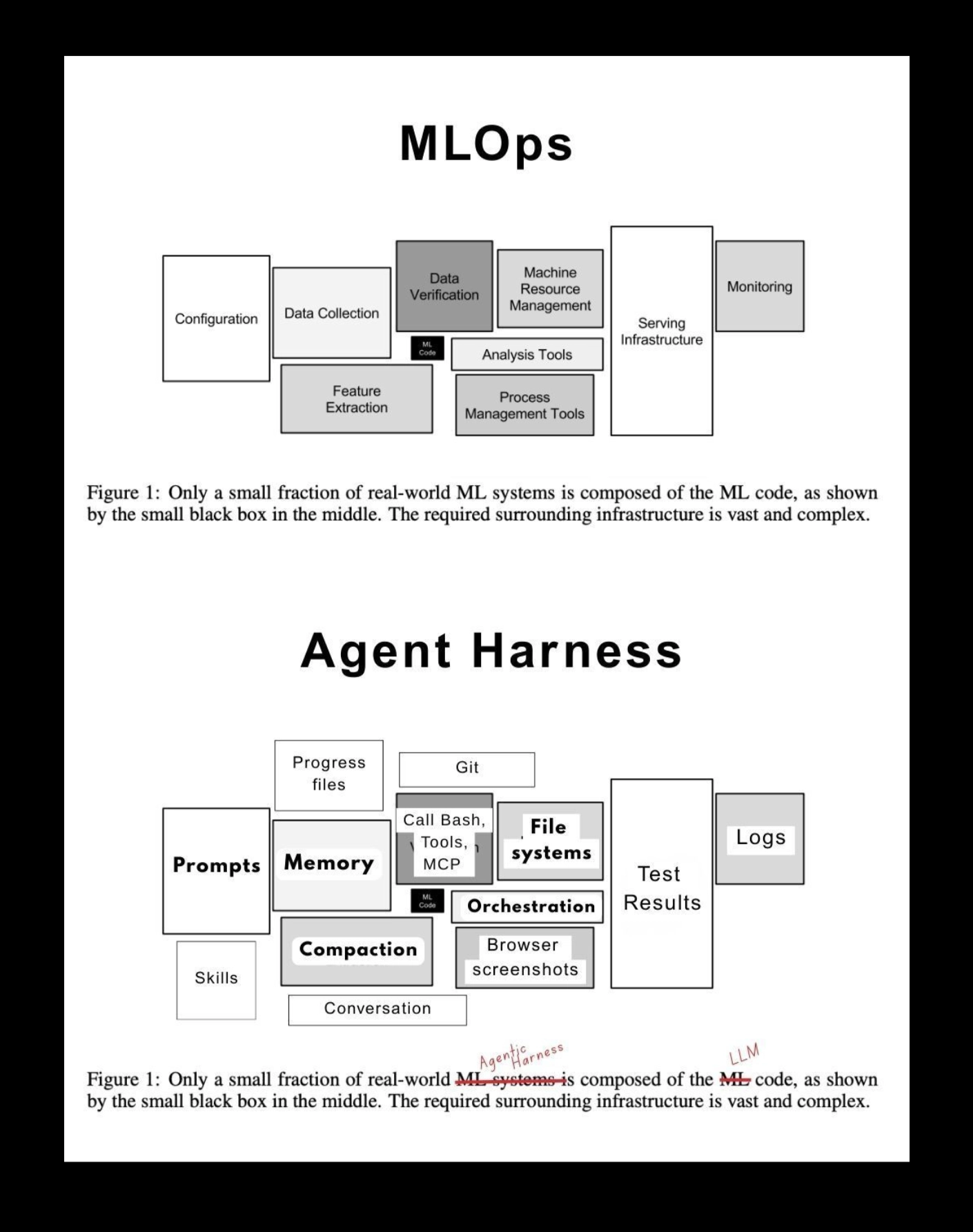

This is why AI delivery has more in common with MLOps than it might first appear.

Credit goes to Demetrios Brinkmann for this image.

In MLOps, the model was always a small part of the system. The real complexity sat in everything around it: data pipelines, validation, serving, and monitoring.

The same is true for AI agents. The model generates the output, but the system determines whether it can be trusted:

- Context and retrieval shape what the AI sees

- Memory and state determine what persists across user interactions

- Prompts, orchestration and tools shape what the AI does

- Permissions and human-in-the-loop define what the system is allowed to do, and when a human steps in

- Evaluation determines whether the output is correct

- Guardrails decide what is allowed to reach users

- Logs and observability make the system traceable

- Versioning tracks what actually produced each output

- Fallbacks and failure handling define what happens when the system breaks

And that’s before cost, latency, and data quality even enter the picture - which is where many AI systems stall after testing.

Where the System Broke

That’s what happened here: hallucinated outputs passed through the system unchecked and were accepted as fact.

The model produced plausible references, but there was no evaluation step to verify them, no traceability to show where they came from, and no guardrails or human checkpoint to stop them from being published.

So the system treated the output as valid, and it moved forward as if it were true.

This is where human-in-the-loop stops being a concept and becomes a design decision.

Not “someone reviews it at some point,” but:

- What exactly needs to be verified?

- At what threshold does a human step in?

- Who is accountable for that decision?

In this case, the control was simple: validate every reference before publication. Not skim the document or review tone, but check whether the sources actually exist.

That step didn’t exist, and the entire policy collapsed because of it.

Why This Matters Now

The withdrawal, in that sense, is less of a setback and more of a very public stress test.

South Africa is still trying to do something difficult, encourage AI adoption while putting guardrails in place early. That hasn’t changed. If anything, this incident accelerates the need to get it right, because it shows how quickly credibility breaks when systems are not designed for verification and accountability.

For companies building AI, the implication is practical.

You don’t need to wait for a revised policy to know what’s coming:

- Outputs will need to be justified

- Decisions will need to be traceable

- Low-confidence cases will need to be handled explicitly

And most of that sits in system design.

If anything, this is the cleanest example of the shift we’ve been talking about:

AI doesn’t fail loudly. It fails slowly and silently, in ways that look correct until someone checks.

The question is whether your system is built to catch that moment, or whether, like this policy, it only becomes visible once it’s already too late.

. . .

Articles